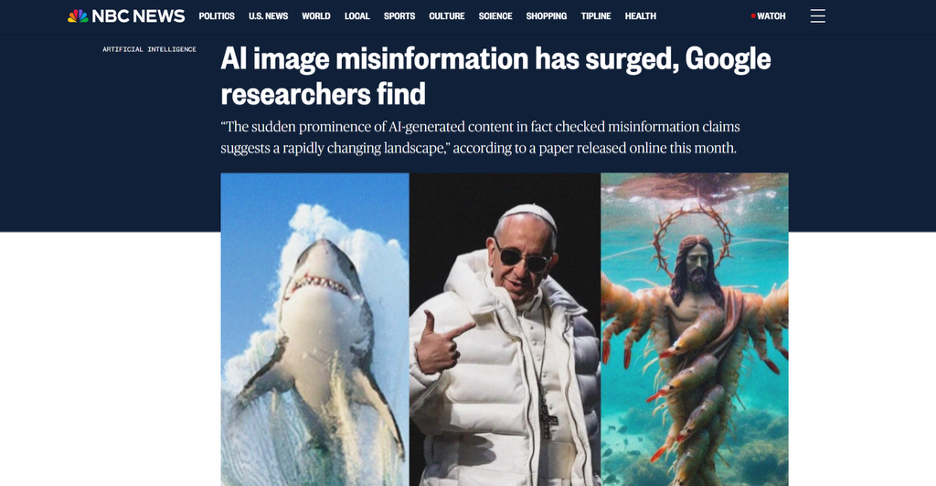

Hunting for AI Images? Metadata Helps, But It Is Not Enough

AI-generated images are now common in social feeds. Some are obvious, but many are hard to distinguish from photographs at a glance. Automated detection is unreliable, so one of the better starting points for verification is the file's metadata.

Earlier AI images often had visible artifacts such as extra fingers and distorted text. Newer models have mostly fixed these, so the next-best signal is a metadata tag written by the generator. One such tag looks like this:

digital_source_type: trainedAlgorithmicMedia

When such tags are present, they are a quick way to confirm an image's origin. The catch is that metadata is easy to remove. A simple screenshot creates a new file with fresh metadata that does not carry any of the original provenance information.

How Generators "Self-Report"

When it comes to honesty about an image's source, not all AI generators are created equal. Depending on the tool, the clues left behind range from clear, complete attribution to partial traces or nothing at all.

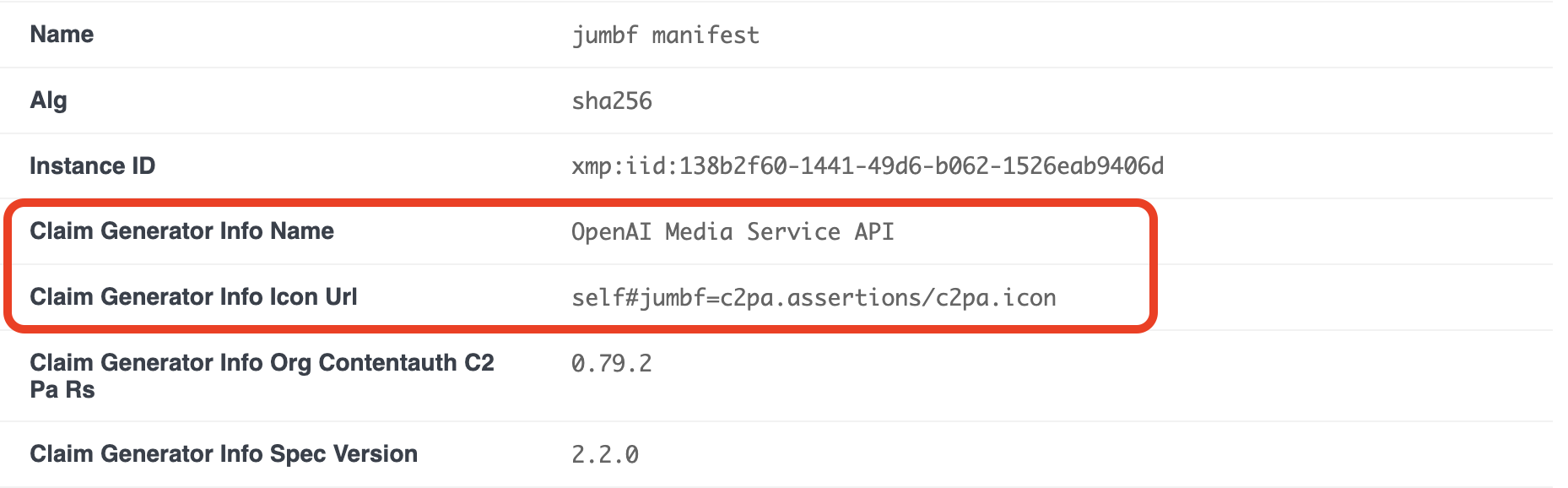

Take DALL-E, for example.

Images generated with OpenAI's tools tend to carry standardized IPTC metadata, including the well-known trainedAlgorithmicMedia tag, as part of an effort to make AI-generated content identifiable at the file level. OpenAI also deploys C2PA (Content Credentials), which acts like a digital wax seal on the file. Together, these help users see how an image was created and whether it has been altered since.

Reading these fields requires a tool that parses XMP and C2PA manifests. ExifTool and browser-based tools like EXIF Viewer both work.
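To see what those tools are looking for, here is a minimal sketch that scans a file's raw bytes for an XMP packet and pulls out the IPTC Digital Source Type value. It assumes the XMP is stored uncompressed as plain text (common in JPEG and PNG files) and uses regular expressions instead of a real XMP parser, so it can miss unusual encodings. It does not read C2PA manifests at all, and the function names are illustrative, not part of any library.

```python
import re
import sys

# IPTC publishes Digital Source Type values as URIs under this prefix;
# trainedAlgorithmicMedia is the last path segment of one of them.
IPTC_PREFIX = "http://cv.iptc.org/newscodes/digitalsourcetype/"

# XMP is embedded as plain XML text, so a byte-level search can find it.
XMP_PACKET = re.compile(rb"<x:xmpmeta.*?</x:xmpmeta>", re.DOTALL)

# The property may be written as an XML attribute or as an element.
DST_VALUE = re.compile(
    r'DigitalSourceType\s*=\s*"([^"]+)"|DigitalSourceType>\s*([^<\s]+)\s*<'
)


def digital_source_types(data: bytes) -> list[str]:
    """Return every Digital Source Type value found in XMP packets in `data`."""
    found = []
    for packet in XMP_PACKET.findall(data):
        text = packet.decode("utf-8", errors="replace")
        for attr_value, elem_value in DST_VALUE.findall(text):
            value = attr_value or elem_value
            if value.startswith(IPTC_PREFIX):
                value = value[len(IPTC_PREFIX):]  # keep only the short term
            found.append(value)
    return found


def looks_ai_labeled(data: bytes) -> bool:
    """True if the file self-reports generation by a trained model."""
    return "trainedAlgorithmicMedia" in digital_source_types(data)


if __name__ == "__main__":
    # Usage: python this_script.py suspect.jpg other.png
    for path in sys.argv[1:]:
        with open(path, "rb") as f:
            print(path, digital_source_types(f.read()))
```

An empty result only means no XMP label was found, which, as with any metadata check, is not evidence that the image is authentic.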

Midjourney has historically been less predictable. For a long time it functioned like a black box, but that has been changing recently with its use of C2PA.

Its newer models align with the industry standard for provenance, but unlike with DALL-E, you may need some familiarity with how each of its model versions writes out data.

Then there's Stable Diffusion, which is starkly different from the others. Because it is open source, there is no central standard for what metadata it should write. Instead, what gets embedded in an image depends on the interface used to run the underlying model.

If someone uses a popular interface like Automatic1111 or ComfyUI, the prompt, seed, and sampler settings are often written into the PNG file's tEXt chunks. There is a catch, however: these models can run locally, so anyone with some technical skill can strip those chunks or output files that carry no history at all.
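Those settings can be read with nothing more than a PNG chunk walker. The sketch below assumes the common case of uncompressed tEXt chunks; it ignores zTXt and iTXt (which some tools use for compressed or non-Latin-1 text) and does not verify chunk CRCs. The keywords you will typically see, such as "parameters" from Automatic1111 or "prompt" and "workflow" from ComfyUI, are conventions of those tools rather than guarantees.

```python
import struct
import sys

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_text_chunks(data: bytes) -> dict[str, str]:
    """Collect keyword -> text pairs from a PNG's uncompressed tEXt chunks."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    texts = {}
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        # Chunk layout: 4-byte big-endian length, 4-byte type, data, 4-byte CRC.
        length, chunk_type = struct.unpack(">I4s", data[pos:pos + 8])
        body = data[pos + 8:pos + 8 + length]
        if chunk_type == b"tEXt":
            # tEXt data: Latin-1 keyword, one NUL byte, Latin-1 text.
            keyword, _, text = body.partition(b"\x00")
            texts[keyword.decode("latin-1")] = text.decode("latin-1")
        elif chunk_type == b"IEND":
            break
        pos += 12 + length  # skip length, type, data, and CRC (CRC not checked)
    return texts


if __name__ == "__main__":
    # Usage: python this_script.py generated.png
    for path in sys.argv[1:]:
        with open(path, "rb") as f:
            for key, value in png_text_chunks(f.read()).items():
                print(f"{key}: {value[:200]}")
```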

This matters because closed platforms can enforce disclosure while open systems cannot. So, while finding metadata can be informative, not finding it isn't conclusive.

C2PA: The Industry Standard for Verification

The industry's biggest weapon against fake imagery is the C2PA standard, developed by the Coalition for Content Provenance and Authenticity. You may also hear it referred to as Content Credentials.

It works much like the chain of custody in a police investigation: C2PA is a cryptographically signed record that travels with the image. It does not simply tell us whether an image is AI-generated; it also records the steps in the image's history:

- Created by: Adobe Firefly

- Edited in: Photoshop

- Exported by: Lightroom

If the file is edited using a tool that is not C2PA-aware, the signature breaks, and the manifest will be reported as invalid. A valid C2PA signature lets a verifier confirm the origin without relying on visual analysis.
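To make the "signature breaks" idea concrete, here is a toy model of hard binding: the manifest records a hash of the exact image bytes, and the record itself is signed. This is not the C2PA format. Real manifests are JUMBF boxes signed with X.509 certificates; the HMAC and the hard-coded key below are stand-ins chosen only so the sketch runs with the standard library.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. Real C2PA signs with X.509 certificates; an HMAC
# stands in here only to keep the sketch self-contained.
DEMO_KEY = b"hypothetical-demo-key"


def _sign(claim: dict) -> str:
    payload = json.dumps(claim, sort_keys=True).encode()
    return hmac.new(DEMO_KEY, payload, hashlib.sha256).hexdigest()


def sign_manifest(image: bytes, actions: list[str]) -> dict:
    """Record the actions and bind them to a hash of the exact image bytes."""
    claim = {"actions": actions, "content_sha256": hashlib.sha256(image).hexdigest()}
    return {"claim": claim, "signature": _sign(claim)}


def verify_manifest(image: bytes, manifest: dict) -> bool:
    """Valid only if the record is untouched AND still matches the image."""
    if not hmac.compare_digest(_sign(manifest["claim"]), manifest["signature"]):
        return False  # the record itself was altered
    # An edit by a tool that does not update the manifest changes the bytes
    # but not the recorded hash, so the binding no longer holds.
    return manifest["claim"]["content_sha256"] == hashlib.sha256(image).hexdigest()


if __name__ == "__main__":
    photo = b"example pixel data"
    manifest = sign_manifest(photo, ["created: Adobe Firefly", "edited: Photoshop"])
    print(verify_manifest(photo, manifest))         # True
    print(verify_manifest(photo + b"!", manifest))  # False: bytes changed
```

Both failure modes matter: editing the pixels breaks the hash binding, and rewriting the recorded history breaks the signature.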

The backers include Adobe, Microsoft, Google, Sony, and Leica, and some new cameras can sign photos at the moment of capture.

C2PA has a key limitation: it only helps when it is present. An image without Content Credentials is not automatically suspect, because many normal pipelines (social media re-encoding, format conversion, basic editors) strip the manifest along with other metadata. Until the supporting toolchain is more widely adopted, C2PA is a partial solution.

☞ Tip: When converting between formats (for example, PNG to WebP), use a tool that preserves metadata. Many converters silently strip C2PA manifests during re-encoding.
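One way to follow that tip is to check for a manifest before and after converting. In JPEG files, C2PA stores its manifest in APP11 segments as JUMBF boxes, so a rough check is to walk the header segments and look for an APP11 payload that mentions the "c2pa" label. This is a presence heuristic only: a sketch that neither parses nor validates the manifest, which is a job for a C2PA-aware tool.

```python
import struct
import sys

# Markers with no length field: TEM and RST0-RST7.
STANDALONE_MARKERS = {0x01, *range(0xD0, 0xD8)}


def has_c2pa_segment(jpeg: bytes) -> bool:
    """Heuristic: does any APP11 segment before the image data mention 'c2pa'?"""
    if not jpeg.startswith(b"\xff\xd8"):  # SOI marker
        raise ValueError("not a JPEG file")
    pos = 2
    while pos + 4 <= len(jpeg):
        if jpeg[pos] != 0xFF:
            break  # lost sync with the marker structure; stop rather than guess
        marker = jpeg[pos + 1]
        if marker == 0xFF:  # fill byte before a marker
            pos += 1
            continue
        if marker in STANDALONE_MARKERS:
            pos += 2
            continue
        if marker in (0xD9, 0xDA):  # EOI or start of scan: header segments are over
            break
        (length,) = struct.unpack(">H", jpeg[pos + 2:pos + 4])  # includes its own 2 bytes
        payload = jpeg[pos + 4:pos + 2 + length]
        if marker == 0xEB and b"c2pa" in payload:  # APP11 carries the JUMBF boxes
            return True
        pos += 2 + length
    return False


if __name__ == "__main__":
    # Usage: python this_script.py before.jpg after.jpg
    for path in sys.argv[1:]:
        with open(path, "rb") as f:
            print(path, "C2PA segment found" if has_c2pa_segment(f.read()) else "none found")
```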

Why Screenshots Break The System

If metadata is so powerful, how are we still being fooled? The answer comes back to what we mentioned earlier: metadata is easy to erase. The moment you take a screenshot of an AI image, the metadata is gone.

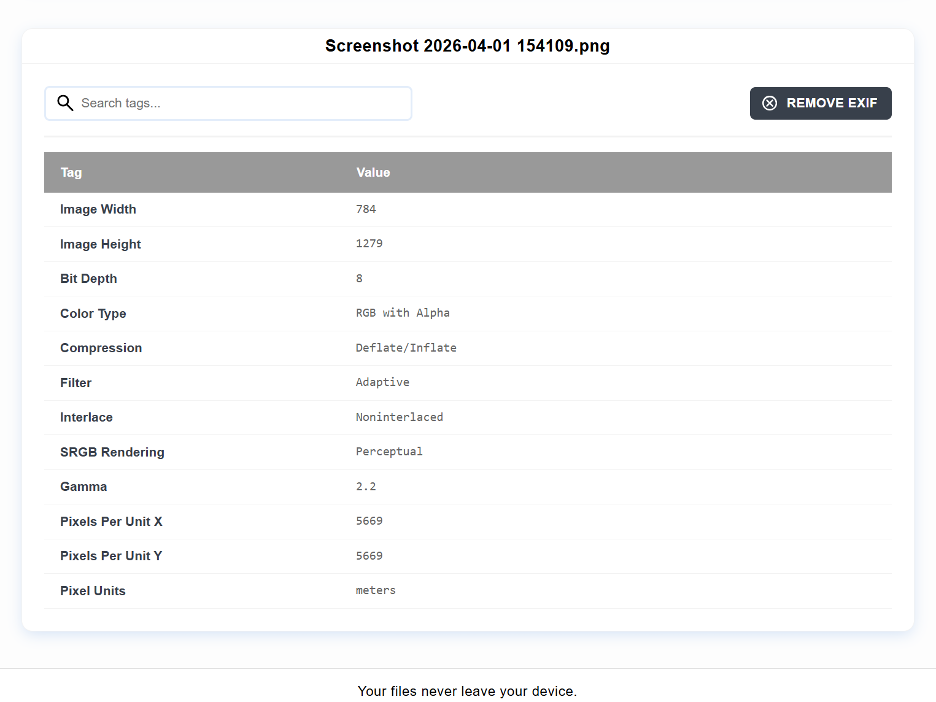

All you get is basic data about the new file itself, with nothing about provenance. In this example, the file carries just 12 tags, where an unaltered photo would have over 200.

A screenshot captures the file's pixels, not its history, and screenshotting and reposting images is common on platforms like X and Facebook. Those platforms also strip EXIF data from uploads by design, to save bandwidth and protect user privacy. The side effect is that AI-generated misinformation can go viral without its origin being easily noticed.

This phenomenon creates a paradox where the more widely an image spreads, the less verifiable it becomes. In an environment where AI-generated images are made for clicks, that loss of context is not entirely an accident; it's baked in.

Looking Beyond Metadata

When you cannot find metadata, the focus shifts back to analyzing the image itself. However, this is not a return to a simple visual check. It is a more technical form of analysis that relies on finding patterns beneath the surface.

Researchers have found that generative models leave behind subtle statistical traces. These can show up as repeating patterns in the frequency domain or as irregularities in noise distribution and compression. The image may look normal to the human eye, but an analytical tool can uncover more.
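As a toy illustration of a repeating pattern in the frequency domain, the sketch below takes a crude high-pass residual (differences between neighboring pixels), averages the row-wise DFT magnitude, and reports frequency bins that stand far above the median. The residual filter, the 10x threshold, and the grayscale list-of-lists input are all assumptions for illustration; published detectors typically work with 2D FFTs over denoiser-based or learned residuals and calibrated thresholds.

```python
import cmath
import statistics


def horizontal_residual(image: list[list[float]]) -> list[list[float]]:
    """Crude high-pass filter: differences between horizontally adjacent pixels.

    Smooth content (flat areas, gradients) mostly cancels out, which keeps
    faint periodic patterns from being drowned out by the image itself.
    """
    return [[row[i + 1] - row[i] for i in range(len(row) - 1)] for row in image]


def mean_spectrum(rows: list[list[float]]) -> list[float]:
    """Average DFT magnitude over all rows, for frequency bins 0..N/2."""
    n = len(rows[0])
    totals = [0.0] * (n // 2 + 1)
    for row in rows:
        mean = sum(row) / n
        centered = [v - mean for v in row]  # remove the DC (average) component
        for k in range(len(totals)):
            # Naive O(N^2) DFT: fine for small crops; use an FFT for real images.
            coeff = sum(v * cmath.exp(-2j * cmath.pi * k * t / n)
                        for t, v in enumerate(centered))
            totals[k] += abs(coeff)
    return [total / len(rows) for total in totals]


def periodic_peaks(image: list[list[float]], ratio: float = 10.0) -> list[int]:
    """Frequency bins whose energy stands far above the median bin."""
    spectrum = mean_spectrum(horizontal_residual(image))
    floor = max(statistics.median(spectrum[1:]), 1e-6)  # avoid a near-zero baseline
    return [k for k in range(1, len(spectrum)) if spectrum[k] > ratio * floor]
```

A period-2 pattern, like the checkerboard artifacts some upsampling layers produce, shows up at bin N/2 of an N-pixel-wide residual, while a plain gradient produces no peaks at all.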

Different model architectures leave distinct signatures. In some cases, it is possible to detect not only that an image is fake but also which family of generators spat it out.

There are also tools that use error level analysis (ELA), which resaves a JPEG at a known quality and highlights regions whose compression error differs from the rest of the image, showing where it was processed differently than expected. While originally developed to detect manipulation, these methods sometimes pick up artifacts that come with image generation.

Detection models trained specifically on diffusion-generated images have shown good results in controlled tests. Expectations should be tempered, though. Detection is an arms race: once a reliable signal is identified, new generator models are trained to eliminate it. Techniques that work well on current-generation images may be outdated within a few months, and adversarial methods are being used specifically to hide detectable signatures.

A 5-Step Workflow to Verify Image Authenticity

If you are suspicious of an image, zooming in and squinting might not be enough. Here is a systematic approach you can use as a verification checklist:

- Metadata check - Inspect the file with an EXIF/IPTC viewer. Look for the Software tag and the Digital Source Type field. These catch images that were saved with their provenance tags intact.

- Verify the manifest - If the file has a C2PA signature, check it at contentcredentials.org/verify or with a C2PA-aware tool to see the recorded production chain.

- Forensic Consensus - What's better than one checker giving you the all clear? Several detectors giving you the all clear. Run the image through multiple detectors such as Hive Moderation or Illuminarty to compare what they say.

- Reverse Image Search - If a photo supposedly shows a newsworthy event but you only find it on some random account, or it has no matches on Google Images or TinEye, it is likely a generated product.

- The Anatomy Check - Zoom into the edges and boundaries in the image. AI still struggles with areas where things intersect. Look where teeth meet lips, where fingers grip an object, or where text shows up in the background. If the text is misspelled or these areas just look weird or melty, toss the image.
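The five checks can be combined into a rough triage rule. The sketch below is one hypothetical way to do it: a positive self-report settles the question, detector results count only as a consensus (the median score), and a file with no evidence comes back "inconclusive" rather than "authentic," since missing metadata proves nothing. The field names, the 0.5 score threshold, and the two-concern rule are assumptions, not calibrated values, and real detectors report scores on their own scales, which you would need to normalize to 0..1 first.

```python
import statistics
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Evidence:
    """Results of the five checks; all field names are hypothetical."""
    source_type: Optional[str] = None               # 1. Digital Source Type, if any
    c2pa_valid: Optional[bool] = None               # 2. None if no manifest was found
    c2pa_says_ai: bool = False                      # 2. manifest records a generator
    detector_scores: list[float] = field(default_factory=list)  # 3. 0..1, 1 = AI
    credible_earlier_source: Optional[bool] = None  # 4. None if not searched
    anatomy_flags: int = 0                          # 5. number of visual red flags


def assess(e: Evidence) -> str:
    # A positive self-report is strong evidence; its absence proves nothing.
    if e.source_type == "trainedAlgorithmicMedia" or (e.c2pa_valid and e.c2pa_says_ai):
        return "labeled AI-generated"
    if e.c2pa_valid:
        return "valid manifest, no AI claim (check who signed it)"
    concerns = 0
    # Count detectors as a consensus (median), never on a single tool's say-so.
    if e.detector_scores and statistics.median(e.detector_scores) >= 0.5:
        concerns += 1
    if e.credible_earlier_source is False:  # searched, found nothing credible
        concerns += 1
    if e.anatomy_flags > 0:
        concerns += 1
    if concerns >= 2:
        return "likely generated"
    if concerns == 1:
        return "suspicious, keep digging"
    return "inconclusive"
```

For example, `assess(Evidence(detector_scores=[0.9, 0.8, 0.2], credible_earlier_source=False))` returns "likely generated": the detectors agree by majority and the reverse search found no credible earlier source.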

The Limits of Any Single Check

Metadata is a useful clue, but it is rarely conclusive on its own. Provenance systems like C2PA are a step in the right direction, but adoption is still partial. Pairing metadata inspection with multiple detection tools, reverse image search, and careful visual checks gives a better overall signal than any single method.

A well-generated image that has been stripped of metadata and shared widely can still pass through most layers of scrutiny. That limitation is unlikely to go away soon.